ML-SIM: Universal reconstruction of structured illumination microscopy images using transfer learning

Abstract

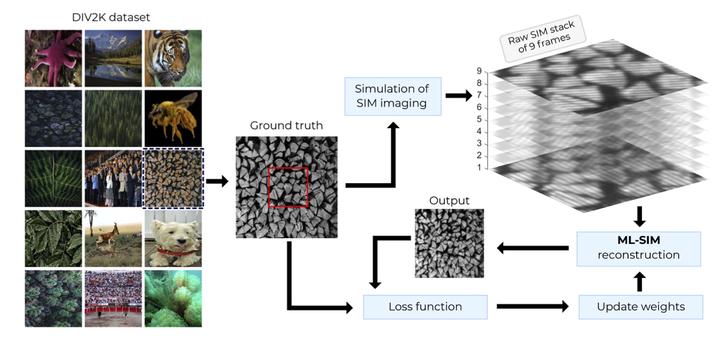

Structured illumination microscopy (SIM) has become an important technique for optical super-resolution imaging because it doubles image resolution at speeds compatible with live-cell imaging. However, the reconstruction of SIM images is often slow, prone to artefacts, and requires multiple parameter adjustments to reflect different hardware or experimental conditions. Here, we introduce a versatile reconstruction method, ML-SIM, which uses transfer learning to obtain a parameter-free model that generalises beyond the task of reconstructing data recorded by a specific imaging system for a specific sample type. We demonstrate the generality of the model and the high quality of the obtained reconstructions by applying ML-SIM to raw data from multiple sample types acquired on distinct SIM microscopes. ML-SIM is an end-to-end deep residual neural network that is trained on an auxiliary domain consisting of simulated images, but is transferable to the target task of reconstructing experimental SIM images. Because the training data are generated to reflect challenging imaging conditions encountered in real systems, ML-SIM becomes robust to noise and irregularities in the illumination patterns of the raw SIM input frames. Since ML-SIM does not require the acquisition of experimental training data, the method can be efficiently adapted to any specific experimental SIM implementation. We compare the reconstruction quality of ML-SIM with current state-of-the-art SIM reconstruction methods and demonstrate advantages in generality and robustness to noise for both simulated and experimental inputs, making ML-SIM a useful alternative to traditional methods under challenging imaging conditions. Additionally, reconstruction of a SIM stack takes less than 200 ms on a modern graphics processing unit, enabling future applications in real-time imaging.
Source code and ready-to-use software for the method are available at http://ML-SIM.github.io.
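
To make the idea of simulated training data concrete, the following is a minimal sketch of how raw SIM frames could be generated from a ground-truth image. It is not taken from the ML-SIM source code. The function name `simulate_sim_stack`, the choice of three orientations and three phases, the Gaussian transfer function, the additive Gaussian noise, and all default parameter values are assumptions made purely for illustration.

```python
import numpy as np


def simulate_sim_stack(image, n_orientations=3, n_phases=3, k=0.2,
                       modulation=0.8, psf_sigma=2.0, noise_std=0.02, seed=0):
    """Illustrative forward model (hypothetical, not the authors' code).

    Each raw frame is the ground-truth image multiplied by a sinusoidal
    illumination pattern, blurred by a Gaussian stand-in for the microscope
    transfer function, and corrupted by additive Gaussian noise.
    """
    rng = np.random.default_rng(seed)
    h, w = image.shape
    yy, xx = np.mgrid[0:h, 0:w]

    # Fourier transform of a Gaussian PSF with standard deviation psf_sigma (pixels).
    fy = np.fft.fftfreq(h)[:, None]
    fx = np.fft.fftfreq(w)[None, :]
    otf = np.exp(-2.0 * (np.pi * psf_sigma) ** 2 * (fx ** 2 + fy ** 2))

    frames = []
    for i in range(n_orientations):
        theta = np.pi * i / n_orientations  # stripe orientation
        for j in range(n_phases):
            phase = 2.0 * np.pi * j / n_phases  # equally spaced phase shifts
            # k is the assumed stripe frequency in cycles per pixel.
            pattern = 1.0 + modulation * np.cos(
                2.0 * np.pi * k * (xx * np.cos(theta) + yy * np.sin(theta)) + phase
            )
            blurred = np.real(np.fft.ifft2(np.fft.fft2(image * pattern) * otf))
            frames.append(blurred + rng.normal(0.0, noise_std, size=blurred.shape))
    return np.stack(frames)


# Usage: a random test image yields a stack of 9 raw frames.
ground_truth = np.random.default_rng(1).random((64, 64))
stack = simulate_sim_stack(ground_truth)
print(stack.shape)
```

Pairs of such simulated stacks and their ground-truth images could then serve as inputs and targets for supervised training. Varying `noise_std`, `modulation`, or the phase spacing in this sketch is one way to mimic the noisy and irregular illumination conditions mentioned in the abstract.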
